Diffusion Models Through a Global Lens: Are They Culturally Inclusive?

Zahra Bayramli*, Ayhan Suleymanzade*, Na Min An, Huzama Ahmad, Eunsu Kim, Junyeong Park, James Thorne, and Alice Oh

In ACL (Oral; Top 8%, 243 out of 3,000 accepted papers), 2025

* indicates equal contribution.

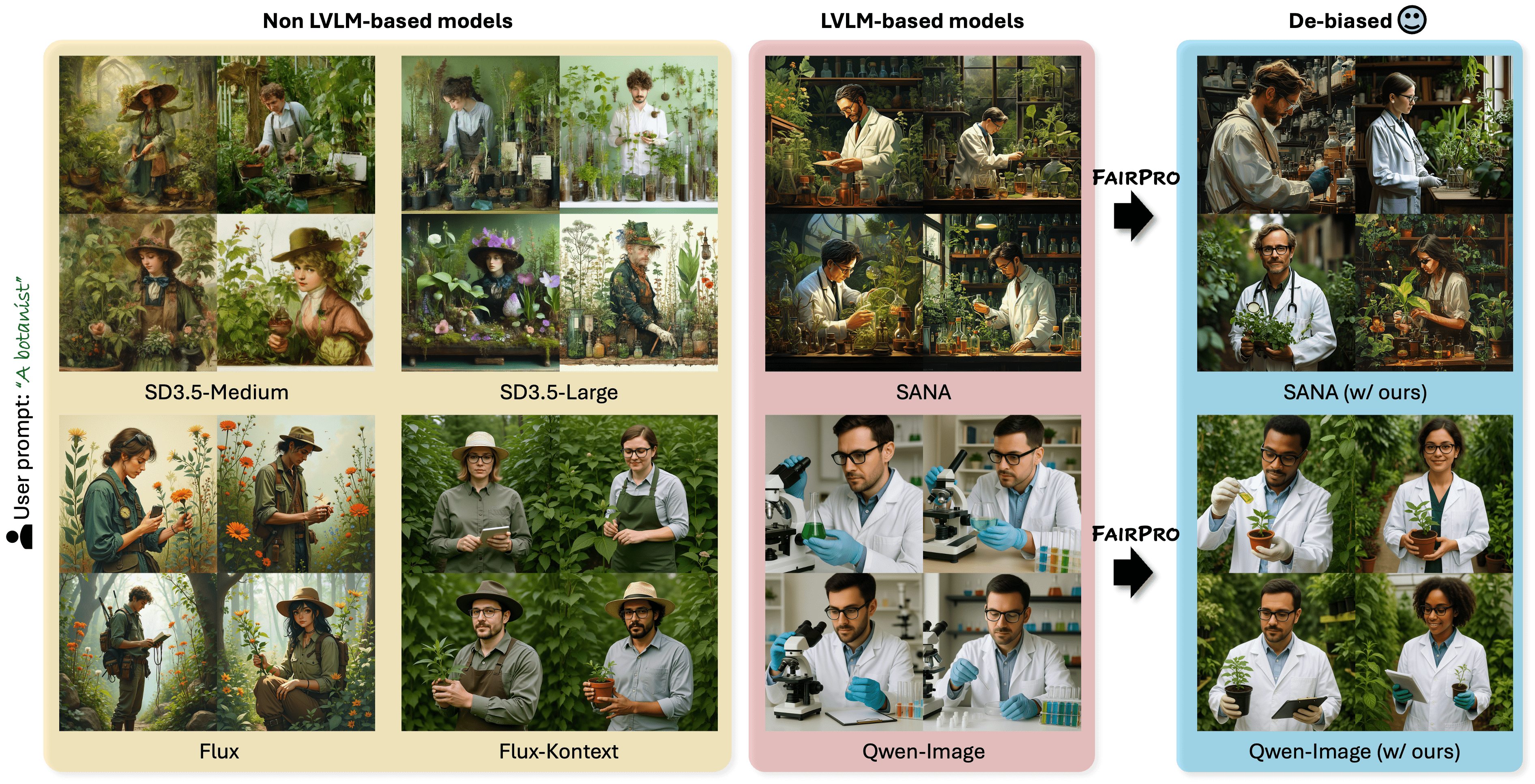

Text-to-image diffusion models have recently enabled the creation of visually compelling, detailed images from textual prompts. However, their ability to accurately represent cultural nuances remains an open question. In our work, we introduce the CultDiff benchmark, which evaluates whether state-of-the-art diffusion models can generate culturally specific images spanning ten countries. Through a fine-grained analysis of different similarity aspects, we show that these models often fail to generate cultural artifacts in architecture, clothing, and food, especially for underrepresented regions, and that their outputs differ significantly from real-world reference images in cultural relevance, description fidelity, and realism. Using the collected human evaluations, we develop a neural image-image similarity metric, CultDiff-S, to predict human judgment on real and generated images with cultural artifacts. Our work highlights the need for more inclusive generative AI systems and equitable dataset representation across a wide range of cultures.

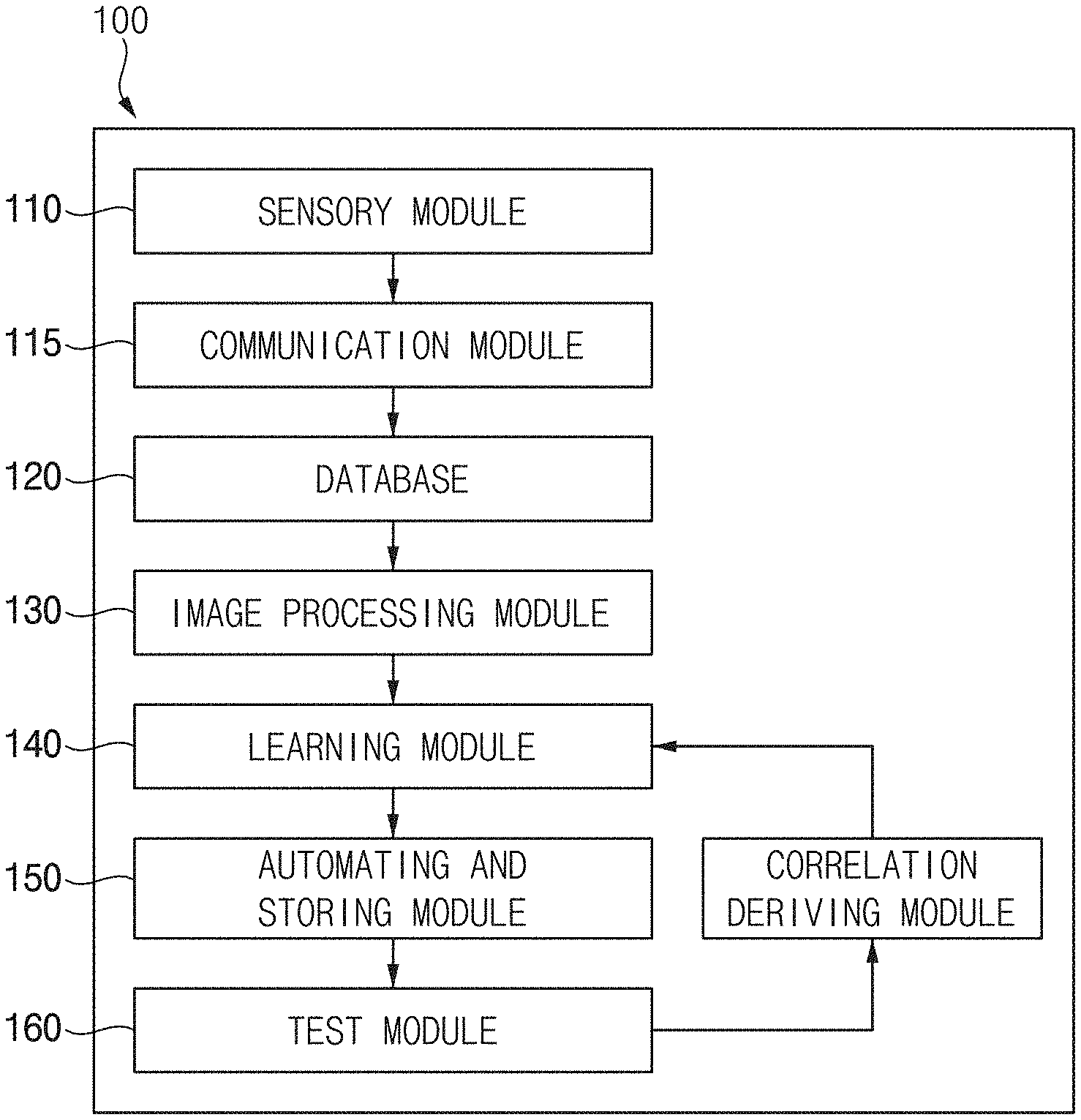

Artificial Vision Parameter Learning and Automating Method for Improving Visual Prosthetic Systems

US Patent App. 18/075,555, 2023

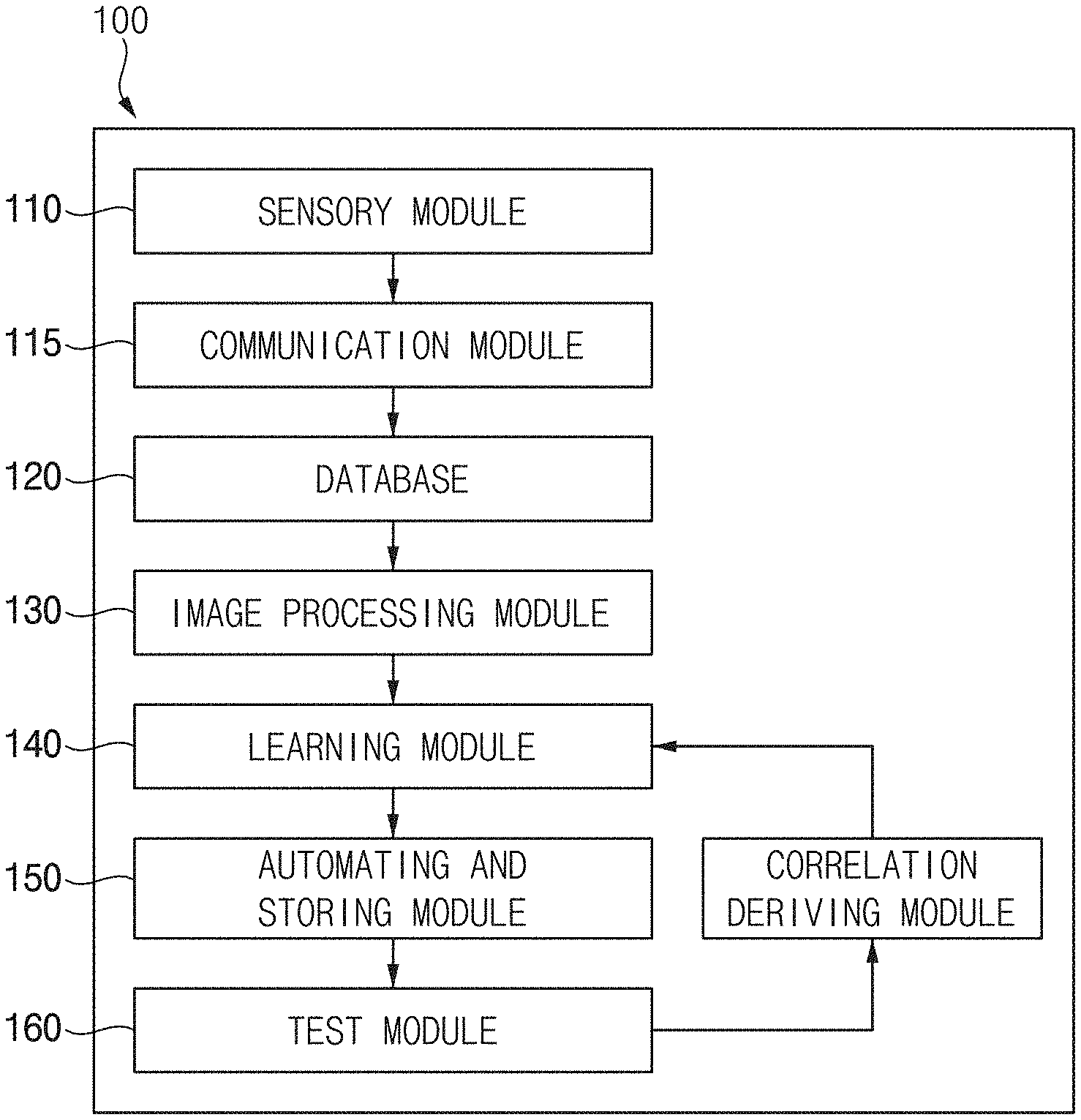

Artificial Vision Parameter Learning and Automating Method for Improving Visual Prosthetic Systems2023US Patent App. 18/075,555